In 1988, the cognitive theorists Fodor and Pylyshyn proposed that the brain builds complex representations, such as sentences, from simple symbols. According to this framework, cognitive architecture fully explains how one could interpret and understand a sentence never seen before. The basic parts of the brain, and the interactions between these parts, enable native speakers of a language to rapidly decompose and construct complex sentences — even Chomsky’s grammatically correct but nonsensical sentence, “colorless green ideas sleep furiously.”

Psychologist Gary Marcus later proposed that specialized brain regions are analogous to data registers in computers, storing and updating the symbols that are manipulated in various mental operations. For the linguist, the data register hypothesis is a promising research avenue. These symbol-level brain regions would explain how the agent (who did it?) and patient (to whom was it done?) of a sentence could be flexibly combined to encode higher-level meaning.

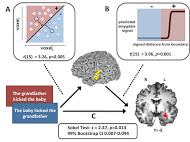

Harvard researchers Steven Frankland and Joshua Greene may have uncovered a key insight into how the brain encodes complex sentences by first studying how it encodes the simple question, “who did what to whom?” In the study, participants were shown sentence pairs describing events in either active or passive voice. The roles of agent and patient were then switched to produce sentences that could differ in either deep structure or surface structure. Deep structure refers to the meaning a sentence conveys, while surface structure refers to the wording used to convey it. “We are interested in how the brain deconstructs the underlying meaning of a sentence, not the way that the sentence was encountered by participants,” Frankland said.

After each sentence was shown, the participants’ neural activity was mapped across small regions of roughly one million neurons each. Frankland looked for regions of increased blood flow and neuron firing to map neural activity as a function of time. Distinct groups of neurons in a brain region known as the left mid-superior temporal cortex (lmSTC) responded with characteristic patterns of activity when the agent and patient roles of the sentence pairs were switched, whereas changing only the sentence phrasing had no observable effect. “Linguists, philosophers, and computer scientists believed abstract variables must be explicitly represented, and this study offers direct evidence for a particular way that the brain encodes these variables in the process of comprehending sentences,” Frankland said.

The pattern of activity in the lmSTC predicts responses in other brain regions, including the amygdala. The defined pathway from stimulus to lmSTC to amygdala may explain how the roles of agent and patient are interpreted in emotional processing. In light of research linking the temporal, parietal and frontal cortices to higher-level semantic processing of sentences, it is plausible that other neural systems use the lmSTC to construct and interpret complex sentences. Much like the data registers of computers, the lmSTC may store sentence-level variables in a temporary memory buffer, allowing other areas of the brain to access the current agent or patient of a sentence.
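The register analogy can be made concrete with a toy sketch. Everything in it is an illustrative assumption rather than anything from the study: the `RoleRegisters` name, the two regular expressions, and the example sentences are hypothetical, and real language is far messier than these patterns allow. The sketch only shows the idea that an active and a passive sentence can fill the same agent and patient slots, while swapping the roles changes what the slots hold.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class RoleRegisters:
    """Hypothetical 'registers' holding who did what to whom."""
    agent: str
    verb: str
    patient: str


# Toy patterns for two surface forms (an assumption for illustration only).
PASSIVE = re.compile(r"^the (\w+) was (\w+) by the (\w+)$")
ACTIVE = re.compile(r"^the (\w+) (\w+) the (\w+)$")


def fill_registers(sentence: str) -> RoleRegisters:
    """Map a simple active or passive sentence onto agent/patient slots."""
    text = sentence.lower().rstrip(".")
    match = PASSIVE.match(text)
    if match:
        # In the passive form the patient comes first and the agent last.
        patient, verb, agent = match.groups()
        return RoleRegisters(agent, verb, patient)
    match = ACTIVE.match(text)
    if match:
        agent, verb, patient = match.groups()
        return RoleRegisters(agent, verb, patient)
    raise ValueError(f"sentence does not fit the toy patterns: {sentence!r}")


active = fill_registers("The dog chased the cat.")
passive = fill_registers("The cat was chased by the dog.")
swapped = fill_registers("The cat chased the dog.")

print(active == passive)  # same roles, different phrasing
print(active == swapped)  # same words, roles switched
```

In this sketch the first comparison is true and the second is false, mirroring the contrast the study draws between a change in phrasing and a change in who did what to whom.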

Frankland and Greene’s study provides evidence that the lmSTC represents the agent and patient variables of sentences and that its activity can be used to predict emotion-related amygdala responses. The finding builds on existing literature by demonstrating that sentence-level variables are encoded by distinct neural populations.

Future research may elaborate on how complex sentences are generated. “We do not really know how sentences with multiple agents and patients, and more complicated clause structure are encoded, or how the brain represents the idiosyncratic meaning of each sentence,” Frankland said. But he is optimistic. “It could be the case that the lmSTC forms skeletal semantic representations for other brain regions that are involved in computing specific sentence meanings,” Frankland added.

Ongoing work may uncover the neural mechanism for forming complex sentences from simple elements, and could answer the long-standing question of how syntax physically manifests in the brain.